Every year, credentialing programs across industries issue millions of digital badges and certificates, collect engagement data, and report back to stakeholders on how the program is performing. Completions are up. Share rates are strong. Learner satisfaction is high.

And then someone in leadership asks the question that changes everything: "But what did it actually change?"

Consider two programs. Both issue thousands of credentials in the same year. One walks into a quarterly business review with a slide showing credential volume, completion rates, and a learner satisfaction score. The room nods and moves on.

The other shows that new hires now reach full productivity up to 45 days faster than before the program launched, with specific timelines by role and domain. Or that credentialed partners generated $36 million in pipeline last quarter, with deal sizes running 2.3 times higher than non-credentialed peers.

That room leans in.

The difference between those two programs isn't the platform, the team size, or the budget. The teams behind the second set of results, Toast and Elastic, started by asking a business question before they built anything: What does our organization need this program to prove, and to whom? Then they designed measurement to answer it.

That sequencing — business question first, measurement second — is what separates programs that earn sustained executive attention from those that don't. And it's a decision any program can make, at any scale, starting now.

The measurement gap in most credentialing programs isn't laziness or lack of ambition. It's structural, and it has three roots.

First, credentials are typically treated as outputs rather than infrastructure. The program's job is to issue them. What happens after issuance is someone else's problem, or nobody's problem, or a problem deferred until budget season arrives and someone needs a justification.

Second, learning systems are siloed from business systems. The LMS tracks completions. The CRM tracks revenue. The HRIS tracks retention. Nobody has connected the dots, and the people running credentialing programs rarely have the organizational access — or the mandate — to do it.

Third, program leaders are rarely aligned with executive KPIs. They're measured on participation, satisfaction, and issuance volume. Those are the metrics their platforms surface. Those are the metrics they report. And as David Leaser, who architected IBM's award-winning badge program, puts it: “Credentialing grew out of a compliance era, where the question was never ‘did this change anything?’ It was ‘did everyone complete it?’”

The expectations have shifted. The defaults haven't.

According to the 2025 State of Credentialing report, only 41% of credential issuers track learner outcomes in any form. The rest are operating entirely on activity and engagement signals — useful for running the program, insufficient for defending it.

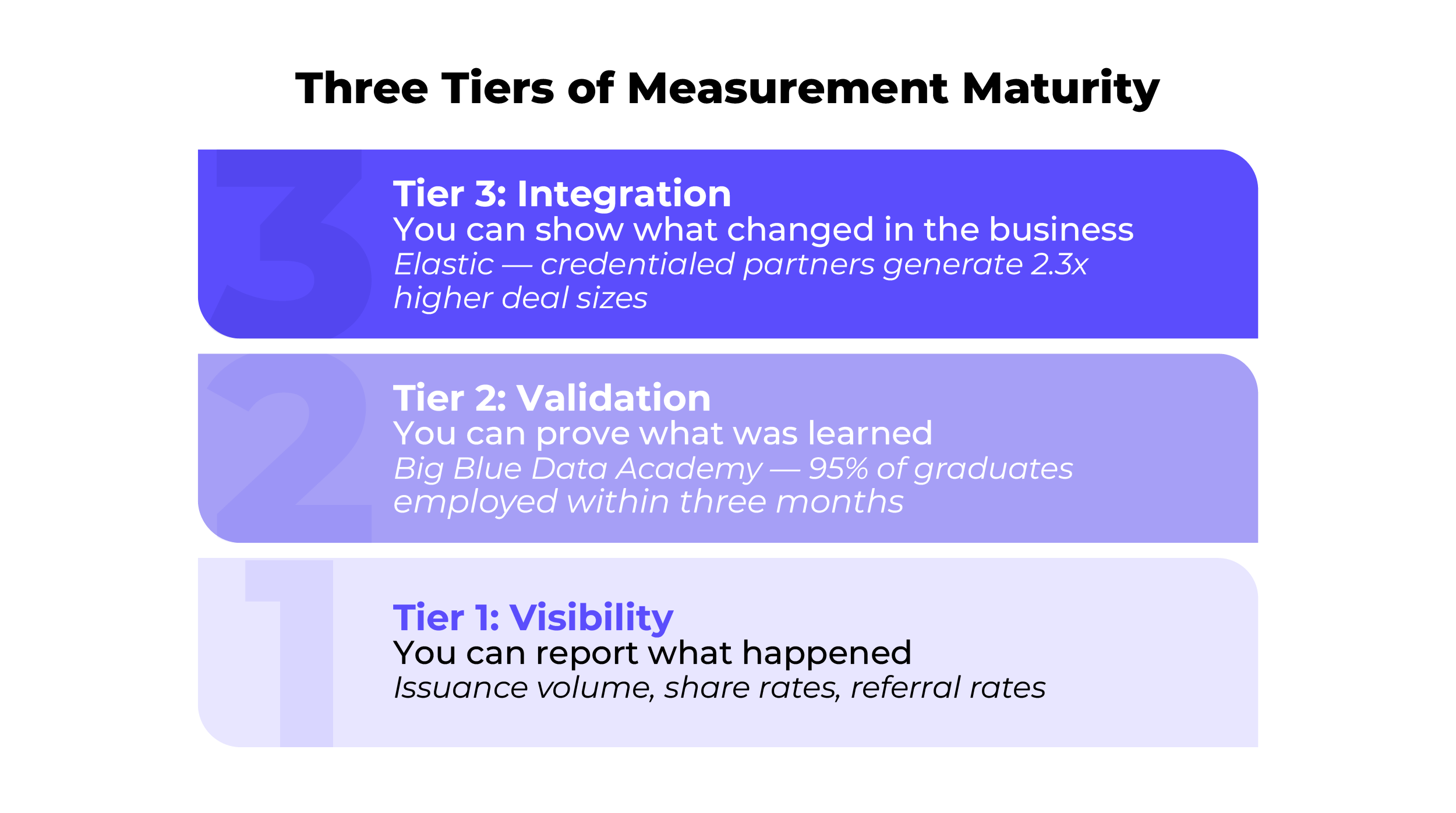

The result is that most credentialing programs live entirely within what we'd call the visibility tier — they can tell you what happened, but not what changed. And a program that can only report what happened will always be a marketing or operations function. It will never be a strategic lever. That distinction matters most when budgets tighten, leadership changes, or someone asks the question that changes everything.

Frameworks for measuring learning effectiveness have existed for decades — Kirkpatrick's four levels being the most cited. But those frameworks measure training effectiveness within a program. What we're describing is a different problem: how credential data connects across systems to outcomes that exist outside the learning environment entirely. That's not a training effectiveness question. It's a data infrastructure question.

With that distinction in mind, credentialing programs tend to operate at one of three tiers, and the programs that consistently earn executive attention are the ones that have moved beyond the first.

The first tier, visibility, is the default state of most credentialing programs. You know how many credentials you issued, who shared them, which channels they were shared to, what your satisfaction scores look like, and which earners drove the most referrals. You can answer "what happened." You cannot yet answer "what changed."

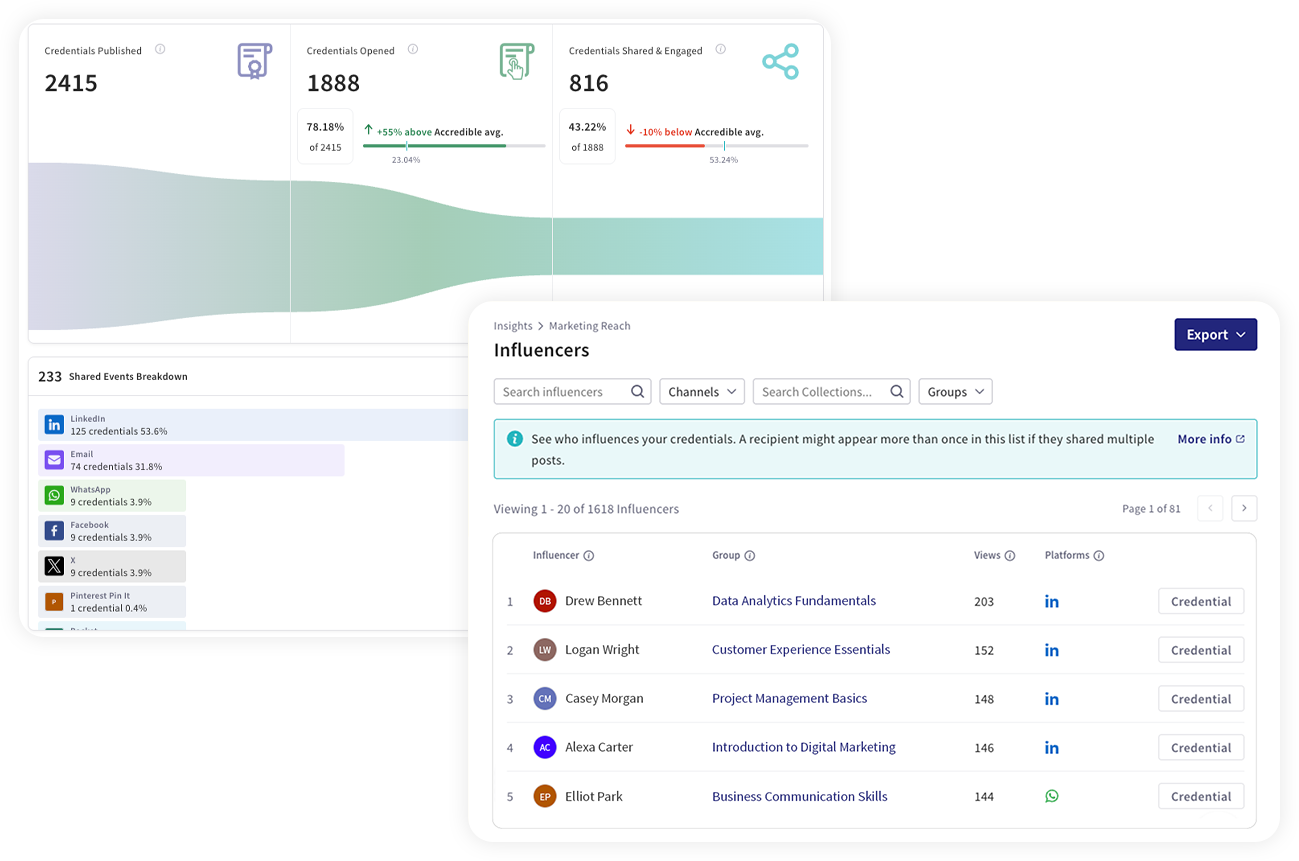

The data in this tier is more valuable than most programs realize. Credential referral rates — tracking how many people visit your program pages after clicking a shared credential — are already audience development data. Every referral is a potential new learner or customer. Top credential influencers — the earners whose shares are driving the most views back to your credential pages — are your organic marketing engine. Sharing channel breakdown tells you where the program has genuine, real-world reach. These signals sit inside most programs' analytics dashboards right now, largely unread by anyone with budget authority.
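If your platform lets you export share events, these three signals take only a few lines to compute. The sketch below is a minimal illustration, assuming a hypothetical export in which each share records the earner, the channel, and the number of program-page visits it referred. The field names and the referral-rate definition (referred visits divided by shares, as a percentage) are our assumptions, not Accredible's schema.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass


@dataclass
class ShareEvent:
    """One credential share (hypothetical export schema)."""
    earner: str
    channel: str
    referral_visits: int  # program-page visits attributed to this share


def referral_rate(shares: list[ShareEvent]) -> float:
    """Referred visits per shared credential, as a percentage (assumed definition)."""
    if not shares:
        return 0.0
    total_visits = sum(s.referral_visits for s in shares)
    return 100.0 * total_visits / len(shares)


def top_influencers(shares: list[ShareEvent], n: int = 3) -> list[tuple[str, int]]:
    """Earners whose shares drove the most referred visits."""
    visits: Counter[str] = Counter()
    for s in shares:
        visits[s.earner] += s.referral_visits
    return visits.most_common(n)


def channel_breakdown(shares: list[ShareEvent]) -> Counter[str]:
    """Number of shares per channel."""
    return Counter(s.channel for s in shares)


# Invented example data
shares = [
    ShareEvent("ana", "linkedin", 3),
    ShareEvent("ben", "linkedin", 0),
    ShareEvent("ana", "email", 1),
    ShareEvent("cy", "x", 1),
]
print(referral_rate(shares))  # 125.0
```

A rate above 100% simply means shares are, on average, sending back more than one visit each.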

The programs that move up the maturity ladder don't necessarily start by building new infrastructure. They start by learning to read what they already have, and reporting it in language that connects to outcomes their stakeholders care about.

At the second tier, credentials are tied to demonstrated competence rather than participation. The curriculum is job-aligned. Assessments require real application. The credential represents something specific that a learner can do, not just something they attended.

Big Blue Data Academy built its entire program around a single validation standard: Graduates needed to be employment-ready within three months. Every design decision — the active learning framework, the hands-on projects, the GitHub portfolio, the Accredible digital credentials issued for each core skill — was engineered to produce that outcome. Over 95% of graduates are employed as data analysts within three months. That number exists because the program was designed to produce it, not because someone went looking for a proof point after the fact.

Toast, a restaurant technology platform, defined role-based proficiency milestones for its customer-facing new hires before writing a single piece of curriculum. Before the program, new hires needed roughly 90 days to reach full productivity. By codifying what 'good' looks like by role and domain, and building credentials around those definitions, Toast now has specific, reportable timelines. Money leads, the finance-focused onboarding role, reach productivity in 41 days and proficiency in 48 days. Kitchen and Menu teams average 35 days to enablement. These aren't engagement metrics. They're operational benchmarks a COO or head of customer success can act on.
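A program can produce the same kind of role-level benchmark from two dates per learner: when they started and when they earned the role's proficiency credential. This is a hypothetical sketch with invented records, not Toast's data or method. It reports the median days per role, so a few unusually slow or fast ramps don't skew the figure, and compares it against a pre-program baseline.

```python
from collections import defaultdict
from datetime import date
from statistics import median

BASELINE_DAYS = 90  # pre-program ramp time ("roughly 90 days" in the Toast example)

# Invented records: (role, start date, date the role's proficiency credential was earned)
records = [
    ("money_lead", date(2024, 1, 8), date(2024, 2, 19)),
    ("money_lead", date(2024, 1, 15), date(2024, 2, 23)),
    ("kitchen_menu", date(2024, 1, 8), date(2024, 2, 12)),
    ("kitchen_menu", date(2024, 1, 22), date(2024, 2, 26)),
]


def median_days_by_role(rows):
    """Median days from start date to proficiency credential, grouped by role."""
    by_role = defaultdict(list)
    for role, start, earned in rows:
        by_role[role].append((earned - start).days)
    return {role: median(days) for role, days in by_role.items()}


ramp = median_days_by_role(records)
days_saved = {role: BASELINE_DAYS - days for role, days in ramp.items()}
```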

At the third tier, integration, learning data becomes business data. Credential completion connects to CRM records, HR cohorts, revenue reporting, or employment tracking. Outcomes are observable and comparable, not self-reported or anecdotal.

Elastic, the search and observability company, built this integration before their program launched. Seismic became the hub for partner learning, triggering Accredible to automatically issue digital credentials upon course completion. Salesforce captured those completions, tagging credentialed partner contacts to deals and pipeline records. The partner portal gave teams real-time visibility into enablement data by region. The result: $36 million in Q1 FY26 pipeline from credentialed partners (up 47% year over year), with credentialed partners generating 2.3 times higher average deal sizes than non-credentialed peers. None of this required custom analytics work after the fact. It required one integration decision made before launch.
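Once credential records and CRM opportunities sit in the same place, the comparison Elastic reports is essentially a join. The sketch below assumes a hypothetical export of credentialed contact IDs and a list of opportunities tagged with a partner contact. It does not reproduce the Seismic, Accredible, or Salesforce integration itself, and all numbers are invented.

```python
from statistics import mean

# Invented export: contact IDs that hold a partner credential
credentialed_contacts = {"c1", "c3"}

# Invented CRM rows: (opportunity ID, partner contact ID, opportunity amount in USD)
opportunities = [
    ("o1", "c1", 120_000),
    ("o2", "c2", 40_000),
    ("o3", "c3", 80_000),
    ("o4", "c4", 60_000),
]


def compare_deal_sizes(opps, credentialed):
    """Split opportunities by whether the partner contact is credentialed, then compare."""
    cred = [amount for _, contact, amount in opps if contact in credentialed]
    other = [amount for _, contact, amount in opps if contact not in credentialed]
    if not cred or not other:
        raise ValueError("need opportunities in both groups to compare")
    return {
        "credentialed_pipeline": sum(cred),
        "avg_credentialed": mean(cred),
        "avg_non_credentialed": mean(other),
        "deal_size_ratio": mean(cred) / mean(other),
    }


summary = compare_deal_sizes(opportunities, credentialed_contacts)
```

A ratio above 1 says credentialed partners' deals are larger on average. On its own, it doesn't say why.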

A professional education program at a major research university made a simpler version of the same decision. When they launched their digital credential program, they added UTM tracking (simple tags that identify where website traffic originates) to their credential page links and pulled Accredible engagement data into Salesforce from day one, attributing net-new leads back to credential shares. The result: a 127% credential referral rate, meaning shared credentials generated, on average, more than one referral each back to program pages, and reenrollment growing from 35% to over 50%. Neither metric required new infrastructure. Both required a tracking decision made at setup.
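UTM tagging is simple to set up. Below is a minimal sketch that appends the standard `utm_source`, `utm_medium`, `utm_campaign`, and `utm_content` parameters to a program page link, then counts leads by source from their landing URLs. The parameter values, the example URL, and the assumption that each lead's first landing URL is stored somewhere you can query are all illustrative.

```python
from __future__ import annotations

from collections import Counter
from urllib.parse import parse_qs, urlencode, urlparse, urlunparse


def tag_credential_link(base_url: str, credential_id: str,
                        campaign: str = "credential_share") -> str:
    """Append UTM parameters so visits from a shared credential can be attributed."""
    utm = urlencode({
        "utm_source": "credential",
        "utm_medium": "social_share",
        "utm_campaign": campaign,
        "utm_content": credential_id,
    })
    parts = urlparse(base_url)
    # Keep any existing query string (and fragment) intact
    query = f"{parts.query}&{utm}" if parts.query else utm
    return urlunparse(parts._replace(query=query))


def leads_by_source(landing_urls: list[str]) -> Counter:
    """Count leads by utm_source, using the URL each lead first landed on."""
    counts = Counter()
    for url in landing_urls:
        source = parse_qs(urlparse(url).query).get("utm_source", ["(none)"])[0]
        counts[source] += 1
    return counts


link = tag_credential_link("https://example.edu/programs/data", "cred-123")
leads = leads_by_source([link, "https://example.edu/programs/data", link])
```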

Across these examples, four behaviors separate the programs that reach the integration tier from those that don't.

First, leaders in this space answer three questions before launching anything: What behavior should change? Within what timeframe? In which system will that change be visible? Big Blue Data Academy defined a three-month employment target before designing the bootcamp. Elastic defined partner revenue acceleration before selecting their tech stack. Toast defined productivity milestones before writing curriculum. Asana, a work management platform, grounded their entire program in three strategic pillars (customer adoption, thought leadership, and demand generation) before issuing a single credential, and embedded a campaigns manager in the launch team specifically to wire up attribution systems from day one.
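Writing the three answers down in a structured form makes gaps obvious before launch. This hypothetical sketch records them as fields and flags any that are still missing. The field names are our own, and the example entry approximates Big Blue Data Academy's "three months" as 90 days and assumes employment tracking is where the outcome shows up.

```python
from dataclasses import dataclass


@dataclass
class OutcomeDefinition:
    """Answers to the three pre-launch questions (hypothetical field names)."""
    behavior_change: str   # What behavior should change?
    timeframe_days: int    # Within what timeframe?
    system_of_record: str  # In which system will that change be visible?

    def missing_answers(self) -> list:
        """Names of the questions that are still unanswered."""
        gaps = []
        if not self.behavior_change.strip():
            gaps.append("behavior_change")
        if self.timeframe_days <= 0:
            gaps.append("timeframe_days")
        if not self.system_of_record.strip():
            gaps.append("system_of_record")
        return gaps


bootcamp = OutcomeDefinition("Graduates employed as data analysts", 90, "employment tracking")
draft = OutcomeDefinition("Partners close larger deals", 0, "")
```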

Most programs skip this step entirely. They define outcomes after the data exists, which means the data trail that would have supported a business case was never built.

Second, they treat integration as a design decision. Most programs treat system integration as a technical project, one that requires IT involvement, development resources, and a dedicated implementation timeline. The programs at the integration tier tend to treat it as a design decision: before we launch, what do we need to tag, track, and connect so that the outcome we care about is measurable?

For Elastic, that meant a Salesforce integration built before the program went live. For the university program, it meant UTM parameters added to credential page links before the first credentials were issued. In both cases, the "integration" was less technically complex than it sounds. It was primarily a decision about what to connect, made early enough to matter.

Third, they report in the language of executive priorities. David Leaser describes this as finding the "Monday morning problems": the things keeping your VP or CFO up at night, the metrics they're accountable for, the questions they're being asked by their own leadership. Credential volume and share rates don't appear on that list. Pipeline velocity, retention delta, time-to-productivity, and employment placement rates do.

The programs that earn strategic investment translate their work into that language consistently. They don't lead with badge counts. They lead with what changed in the business, and they bring the credential data as evidence for that change, not as the headline.

Fourth, they are candid about what their data does and doesn't prove. This is the move that most distinguishes intellectually credible programs from those that overstate their impact. None of the examples in this piece prove that credentials caused their outcomes. Credentialed partners may already be more motivated. Learners who reenroll may be inherently more engaged. Toast's faster ramp times likely reflect curriculum quality as much as credentialing specifically.

The programs that earn the most durable executive trust are the ones that say so, clearly and without apology. "Credential density in our partner territories correlates with pipeline velocity. That pattern has held for three consecutive quarters. We're not claiming badges close deals — we're saying the data is consistent enough to warrant continued investment." That's a sentence a CFO will remember. It's also a sentence they'll trust, which is more valuable than any overclaimed causal chain.
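A claim like "that pattern has held for three consecutive quarters" can be checked with a per-quarter correlation. This sketch uses invented territory data (credentialed partners per 100 partners, and a pipeline velocity index) and a plain Pearson correlation; on Python 3.10+, `statistics.correlation` computes the same coefficient. The 0.5 threshold is an arbitrary assumption. The check tests consistency of association only, which is exactly the claim being made, not causation.

```python
# Invented per-territory data for each quarter:
# (credentialed partners per 100 partners, pipeline velocity index)
quarters = {
    "Q1": ([5, 12, 20, 31], [1.1, 1.4, 1.9, 2.6]),
    "Q2": ([6, 14, 22, 30], [1.0, 1.5, 2.1, 2.4]),
    "Q3": ([8, 15, 25, 33], [1.2, 1.3, 2.2, 2.8]),
}


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def pattern_holds(data, threshold=0.5):
    """Per-quarter correlation, and whether every quarter meets the (arbitrary) threshold."""
    rs = {q: pearson(density, velocity) for q, (density, velocity) in data.items()}
    return rs, all(r >= threshold for r in rs.values())


rs, consistent = pattern_holds(quarters)
```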

As Leaser puts it: "A lot of it's correlational. But frankly, that's enough to show the value."

Most credentialing programs don't reach the integration tier because of five decisions that weren't made early enough or weren't made at all. No executive KPI alignment, so the program reports in learning language to a business audience. No system integration, so credential data lives in the platform and never connects to where outcomes are measured. No defined post-completion outcome window, so there's no way to evaluate movement even when the data exists. No cross-functional ownership, so nobody outside the learning team feels accountable for what the program produces. And no habit of reading cross-system data, so the signals that already exist — referral rates, repeat earner patterns — never make it into a business conversation.

These aren't permanent barriers. They're the five decisions that, made deliberately and early, determine whether a program can ever prove its consequence. The good news is that none of them require a large team, a technical build, or executive sponsorship to start. Most of them require a conversation.

The most common mistake programs make when they decide to improve their measurement is assuming they need to build something new. Most don't. They need to start reading what they already have.

If you're issuing credentials with a digital badging platform like Accredible, you already have data that most programs don't report upward: credential referral rates, top sharing channels, which earners are driving the most visibility for your program, how many net-new visitors credential shares are generating, and which earners are coming back for additional credentials.

These are not engagement metrics in the way that badge views or CSAT scores are engagement metrics. They are signals about program reach, learner behavior, and audience development, and they connect directly to questions your marketing, revenue, or enrollment leadership is already asking.

Start there. Pull that data. Put it in a slide with context. Show it to one stakeholder who owns an outcome your program is adjacent to. The conversation that follows will tell you what to build next.

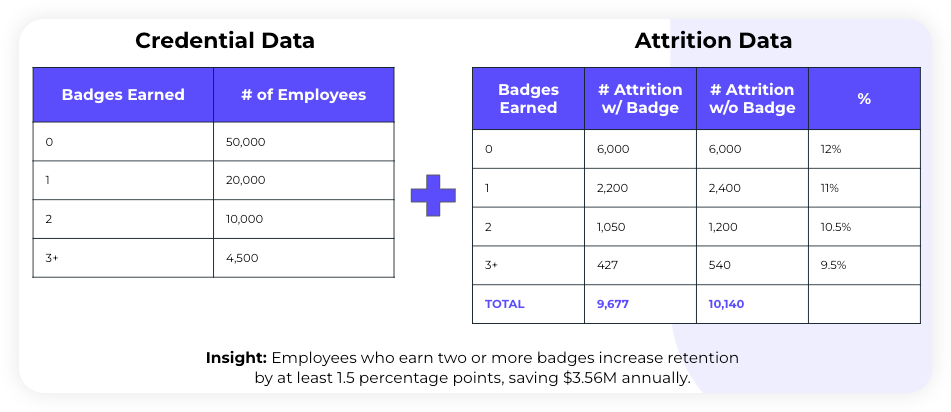

Here's what that looks like in practice. At IBM, David Leaser's team combined credential data with attrition data to produce a single insight that translated directly into dollar value for leadership.
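The IBM calculation itself isn't spelled out here, so the following is only a generic, hypothetical sketch of how a retention gap can be expressed in dollars: multiply the difference in attrition rates by the credentialed headcount, then by an assumed cost to replace one employee. Every input is invented, and treating non-earners as the baseline is a correlational assumption.

```python
def retention_value(credentialed_headcount: int,
                    attrition_credentialed: float,
                    attrition_non_credentialed: float,
                    replacement_cost: float) -> float:
    """Dollar value of the attrition gap between credentialed and non-credentialed staff.

    Assumes non-credentialed attrition is a fair baseline for what credentialed staff
    would otherwise have done. That is a correlational assumption, not proof.
    """
    rate_gap = attrition_non_credentialed - attrition_credentialed
    avoided_departures = rate_gap * credentialed_headcount
    return avoided_departures * replacement_cost


# Invented inputs: 2,000 credentialed employees, 8% vs. 12% annual attrition,
# and an assumed $50,000 cost to replace one employee.
value = retention_value(2_000, 0.08, 0.12, 50_000)  # about $4 million
```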

Use reenrollment rate or credential referral rate as a proxy business signal and build a simple cohort comparison — credential earners vs. non-earners on whatever outcome metric you have access to.
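A cohort comparison can be this small. The sketch assumes a hypothetical list of learners flagged by whether they earned a credential and whether they reenrolled within your chosen window, and returns the reenrollment rate for each cohort.

```python
# Invented learner records: (learner ID, earned a credential?, reenrolled within the window?)
learners = [
    ("l1", True, True), ("l2", True, False), ("l3", True, True), ("l4", True, True),
    ("l5", False, False), ("l6", False, True), ("l7", False, False), ("l8", False, False),
]


def reenrollment_by_cohort(rows):
    """Reenrollment rate (%) for credential earners vs. non-earners."""
    rates = {}
    for label, earned_flag in (("earners", True), ("non_earners", False)):
        cohort = [reenrolled for _, earned, reenrolled in rows if earned == earned_flag]
        # An empty cohort has no meaningful rate
        rates[label] = 100.0 * sum(cohort) / len(cohort) if cohort else None
    return rates


rates = reenrollment_by_cohort(learners)
```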

The university program referenced earlier built its reenrollment story before it had full CRM integration, then used that story to justify the deeper attribution build. You don't need the full system to start the conversation. You need enough signal to earn the next investment.

Automate post-completion survey delivery so it reaches learners six to twelve months after credential issuance — long enough for outcomes to emerge, consistent enough to build a dataset over time.
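Most survey or marketing automation tools can schedule this for you. If yours can't, the selection logic is short. This sketch assumes a hypothetical mapping of credential IDs to issue dates and a set of credentials already surveyed, and returns the credentials that are six to twelve months past issuance today.

```python
import calendar
from datetime import date


def add_months(d: date, months: int) -> date:
    """Shift a date by whole months, clamping to the last day of shorter months."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def due_for_survey(issued, already_surveyed, today, start_months=6, end_months=12):
    """Credential IDs that are 6-12 months past issuance today and not yet surveyed."""
    return sorted(
        cred_id
        for cred_id, issued_on in issued.items()
        if cred_id not in already_surveyed
        and add_months(issued_on, start_months) <= today < add_months(issued_on, end_months)
    )


# Invented data: credential ID -> issue date
issued = {"a": date(2024, 1, 31), "b": date(2024, 9, 1), "c": date(2023, 3, 1)}
```

Run it once a day from whatever scheduler you already use, and add each returned ID to the surveyed set after sending so no one is asked twice.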

LHH, a global talent solutions and career transition firm, launched a structured credentialing program for its global consultant network and measured a 5% rise in candidate satisfaction scores within one calendar year. That's a modest number reported with honest framing, and it shifted a conversation from cost to value. Imperfect data, consistently collected, compounds over time.

Start with one conversation. Find the executive in your organization who owns the outcome your program is closest to — revenue, retention, readiness, employment — and ask: "What would it take for you to see this program as a driver of that outcome?" The answer to that question is your measurement roadmap.

Asana found their cross-functional partner in a campaigns manager who had seen certification drive pipeline and revenue at a previous company. That relationship unlocked the attribution infrastructure that made the 6x Asana Academy lead conversion result reportable.

The starting point is never the system. It's the question.

Digital credentials issued for recognition generate visibility. Credentials integrated into business systems generate intelligence. The organizations featured here — Asana, Elastic, Toast, Big Blue Data Academy, and others — made one early, deliberate choice: to treat credentialing as infrastructure rather than recognition. That choice made measurement possible. And measurement is what made their programs defensible, fundable, and worth building.

The maturity ladder doesn't require starting at the top. It requires knowing where you are, deciding where you want to go, and making the next decision — about what to track, what to connect, what to report — with that destination in mind.

Every program has a consequence worth proving. The question is whether you've built the path to prove it.

Want to build a measurement strategy for your program? Our team can help you identify the right starting point — whether you're at Tier 1 or working toward integration. Talk to our team →

Schedule a demo and discover how Accredible can help you attract and reward learners, visualize learning journeys, and grow your program.
